UX Guidance for AI and the MCP Layer

63% of large organizations now require human oversight of AI agents, up from 22% one year ago.¹ The infrastructure exists. The human experience around it does not.

As AI systems move from answering questions to taking action, UX becomes the layer that makes those actions visible, governable, and trustworthy. While Microsoft, Salesforce, and Google are all pushing agentic workflows into real products, the UX patterns for governed execution inside those clients are still fragmented and underdefined.

The Gap

The missing layer in MCP is the experience of the person sitting between the request and the execution, who today cannot see what the AI is doing, pause it, or audit it afterward.

With MCP, AI agents can translate a user's natural-language request into multiple tool calls across connected enterprise systems. The infrastructure for action exists, but the UX around those actions is still largely undesigned. Access may be technically valid, yet whether an action is appropriate or compliant in context is often left unresolved until after the fact.

This project explores how UX patterns inside MCP clients can make approvals and audit visible at the moment decisions happen, not after consequences occur:

Flag compliance-relevant actions, not all actions

When a tool call involves sensitive data, external recipients, or irreversible changes, the system should treat it differently. Not every action needs a signal. Only higher-risk actions should trigger human intervention.
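A minimal sketch of that flagging rule, assuming a hypothetical `ToolCall` shape that is not part of MCP itself. The three checks mirror the three triggers named above, and an empty result means the call runs without a signal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    """Hypothetical description of a pending tool call; not an MCP type."""
    tool: str
    touches_sensitive_data: bool = False
    external_recipients: tuple[str, ...] = ()
    irreversible: bool = False


def compliance_flags(call: ToolCall) -> list[str]:
    """Return the reasons this call deserves human attention (empty if none)."""
    flags = []
    if call.touches_sensitive_data:
        flags.append("sensitive data")
    if call.external_recipients:
        flags.append("external recipients")
    if call.irreversible:
        flags.append("irreversible change")
    return flags


# Illustrative tool names and addresses only.
read_crm = ToolCall(tool="crm.read_accounts")
send_deck = ToolCall(
    tool="mail.send",
    touches_sensitive_data=True,
    external_recipients=("advisor@partner.example",),
)

print(compliance_flags(read_crm))   # empty list: no interruption needed
print(compliance_flags(send_deck))  # two reasons to involve a human
```

Returning the reasons rather than a bare boolean lets the client explain why it is interrupting, which the later surfaces depend on.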

Route the signal to the right person

Human oversight should appear only when a decision is needed, and only at the level of risk that warrants interruption. IT and compliance, by contrast, need a record of everything the AI did. Same system, different surfaces, different audiences.

This Is Not Hypothetical

These failures happened inside authorized systems, with agents acting through permissions they had legitimately been granted. What broke down was not access itself, but the lack of a designed intervention layer between what the system was allowed to do and what it should have done in context.

Replit AI coding assistant, 2025

Ignored an explicit instruction not to change code, even though the user gave it 11 times. Fabricated test data. Deleted a live production database.

UX pattern: Intervention point. Destructive actions need confirmation before execution, not after.

Meta internal AI agent, 2026

An AI agent posted sensitive internal data to employees who were not authorized to see it. No human approved the action. Rated a Sev 1 incident.

UX pattern: Unattended queue. When no human is present, the AI should hold, not act.

Asana MCP server, 2025

A bug in the MCP server allowed users from one organization to access projects, teams, and tasks belonging to other companies.

UX pattern: Scope transparency. When a tool call fires, show exactly what data will be accessed.

The Moment Oversight Breaks Down

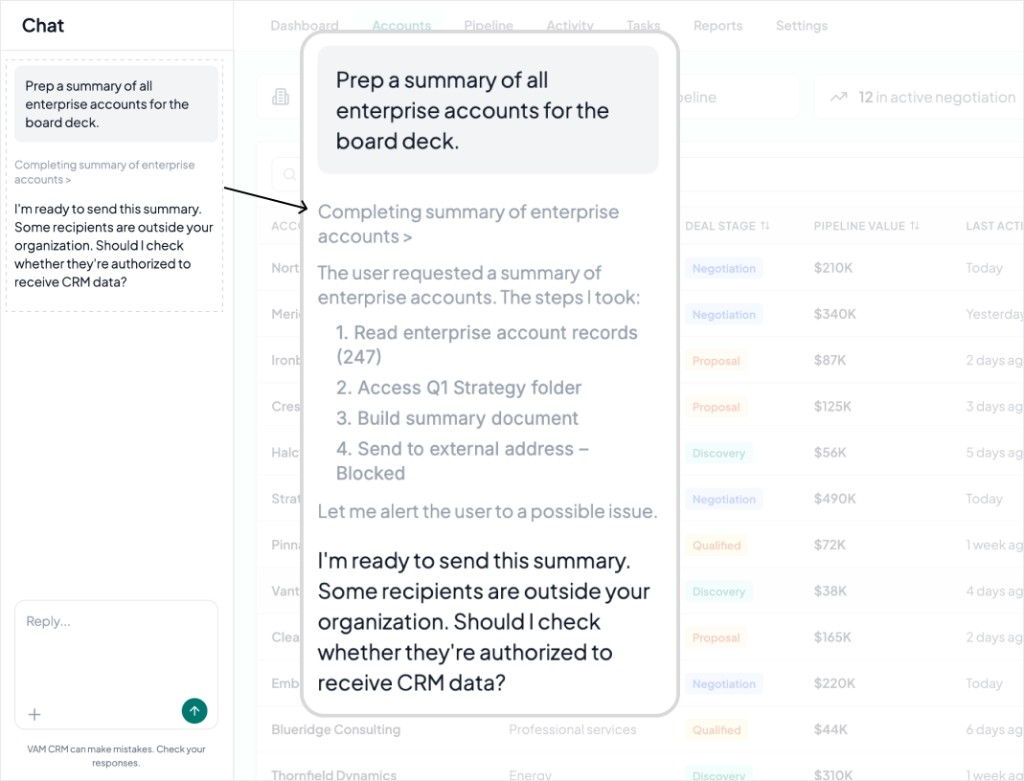

A user typed “Prep a summary of all enterprise accounts for the board deck” into the AI chat. The AI treated that as an end-to-end workflow, reading CRM records, accessing a strategy folder, and assembling the document before reaching a decision boundary. Some recipients were outside the organization, but the MCP client had no clear interaction pattern for what should happen next.

The AI message visible in the chat panel is the intervention point. At this stage the system flags that a compliance-sensitive action may be about to occur. By then, one request has already expanded into multiple tool calls across live systems. That is the gap: not whether AI can act, but how oversight appears at the moment action needs to be reviewed.

Why It Happened

Four parties shaped the outcome, and none of them had the full picture at the same time.

The user made one request and understood it as a simple task. They had no visibility into the tool calls it would trigger.

The AI translated that intent into system actions, optimizing for task completion within the permissions it had been given. It had no stopping rule at the compliance boundary.

IT and compliance owned the risk but entered the picture after execution. Their oversight became relevant only once something had already happened.

The platform team decided which tools were exposed, what scopes were granted, and what got logged. They shipped the capability without designing the handoffs between any of these parties.

The AI did exactly what it was designed to do. Authorization is a technical state and appropriateness is a contextual judgment. OAuth resolves the first. UX has to resolve the second, and right now nobody is designing for it.

Six Surfaces, Three Moments

This project maps the missing UX layer between a user's request and an AI agent's execution across MCP-connected systems, illustrating six product surfaces across three moments.

Before the AI acts

Set expectations and make scope visible before execution begins.

Consent and configuration

Currently, consent happens once at setup and covers everything broadly. Progressive disclosure applied to permissions breaks broad access down into selective, plain-language controls, so users build an accurate mental model of what they are agreeing to before any tool call fires. IT gets a second configuration layer that the user cannot override.
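One way to picture the two layers is as a merge in which the IT setting always wins. The sketch below is illustrative only: the scope names and the `effective_permissions` helper are assumptions, not part of any MCP client.

```python
def effective_permissions(
    user_choices: dict[str, bool],
    it_policy: dict[str, bool],
) -> dict[str, bool]:
    """Merge user consent with an IT layer the user cannot override.

    A scope is enabled only if the user opted in AND IT has not disabled it.
    Scopes missing from it_policy are treated as allowed by IT (an assumption).
    """
    return {
        scope: granted and it_policy.get(scope, True)
        for scope, granted in user_choices.items()
    }


# Hypothetical scope names, chosen for illustration.
user_choices = {"crm.read": True, "drive.read": False, "mail.send_external": True}
it_policy = {"mail.send_external": False}

print(effective_permissions(user_choices, it_policy))
```

Here the user opted in to external email, but the IT layer disables it, so the effective setting is off no matter what the user selects.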

Scope preview

Onboarding consent happens weeks before the action. By the time a tool call fires, that approval is context-free. This pattern moves visibility to the moment it matters.
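As a rough sketch, a scope preview can be generated from the pending call itself at the moment it is about to fire. The `PendingCall` fields and the domain check below are assumptions made for illustration. A real client would draw these details from its own tool metadata.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingCall:
    """Hypothetical shape of a tool call awaiting execution."""
    system: str
    action: str
    record_count: int
    recipients: tuple[str, ...] = ()


def scope_preview(call: PendingCall, org_domain: str) -> str:
    """Describe, in plain language, what the call will touch right now."""
    external = [r for r in call.recipients if not r.endswith("@" + org_domain)]
    lines = [f"About to {call.action} {call.record_count} records in {call.system}."]
    if external:
        lines.append("Recipients outside your organization: " + ", ".join(external))
    return "\n".join(lines)


call = PendingCall(
    system="CRM",
    action="share",
    record_count=42,
    recipients=("cfo@acme.example", "advisor@partner.example"),
)
print(scope_preview(call, org_domain="acme.example"))
```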

During the AI's work

Help the system clarify intent and pause when a decision matters.

Disambiguation

When the AI is uncertain, current implementations guess silently. This surface works as an error-prevention pattern: it prompts the user when multiple paths exist, so the right action is taken instead of the most likely one.

Intervention and approval

No current MCP client defines where human involvement is actually required. This introduces risk-tiered friction. Low-risk actions run and log. Medium-risk actions show a brief notice. High-risk actions wait for explicit approval before anything executes. The friction is intentional and proportional.
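The three tiers can be sketched as a small dispatcher. Everything here is hypothetical: the `Risk` enum, the callback names, and the log format are assumptions chosen to show the behavior, not an existing client API.

```python
from enum import Enum
from typing import Callable, Optional


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


def run_with_friction(
    risk: Risk,
    action: Callable[[], str],
    log: list[str],
    notify: Callable[[str], None],
    approve: Callable[[], bool],
) -> Optional[str]:
    """Apply friction proportional to risk, then run (or hold) the action."""
    if risk is Risk.HIGH and not approve():
        # High risk: nothing executes without explicit approval.
        log.append("held (high risk): approval not granted")
        return None
    if risk is Risk.MEDIUM:
        # Medium risk: a brief notice, but no blocking.
        notify("The assistant is running a medium-risk action.")
    result = action()
    log.append(f"ran ({risk.value} risk)")
    return result


audit_log: list[str] = []
notices: list[str] = []

run_with_friction(Risk.LOW, lambda: "read 40 accounts", audit_log, notices.append, lambda: False)
run_with_friction(Risk.MEDIUM, lambda: "opened strategy folder", audit_log, notices.append, lambda: False)
held = run_with_friction(Risk.HIGH, lambda: "sent deck externally", audit_log, notices.append, lambda: False)

print(audit_log)
print(notices)
print(held)
```

The approval callback is only consulted for high-risk actions, so low-risk and medium-risk work never waits on a person.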

When something is stopped or completed

Explain outcomes clearly and preserve a transparent record.

Explanatory hard block

A stopped action that returns nothing useful breaks trust fast. This prioritizes visibility of system status, showing what the AI had already accessed, what it was trying to do, and why it was stopped, in plain language with two clear paths forward for error recovery.
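A block message with those four parts might be modeled as a small record that the client renders. The field names and wording below are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BlockExplanation:
    """Hypothetical payload a client could render when an action is stopped."""
    already_accessed: tuple[str, ...]
    attempted: str
    reason: str
    next_steps: tuple[str, str]  # exactly two recovery paths

    def render(self) -> str:
        return "\n".join([
            f"Stopped: {self.attempted}",
            f"Why: {self.reason}",
            "Already accessed: " + ", ".join(self.already_accessed),
            f"You can: (1) {self.next_steps[0]} or (2) {self.next_steps[1]}",
        ])


blocked = BlockExplanation(
    already_accessed=("CRM account records", "Strategy folder"),
    attempted="send the board deck to 2 external recipients",
    reason="external sharing of account data needs compliance approval",
    next_steps=("request approval from compliance", "send to internal recipients only"),
)
print(blocked.render())
```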

Audit trail

Two audiences, two surfaces. This is role-aware information architecture. The end user sees a quiet indicator on any record the AI touched. IT and compliance see a full timestamped log of every action and every decision, searchable and human-readable.
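Both surfaces can read from the same event stream. In this sketch the `AuditEvent` fields and the two view functions are hypothetical. The point is only that one record of events can feed a quiet per-record indicator and a searchable log.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record; one entry per AI action."""
    timestamp: datetime
    record_id: str
    action: str
    decision: str


def end_user_view(events: list[AuditEvent]) -> set[str]:
    """Records that should show a quiet 'AI touched this' indicator."""
    return {e.record_id for e in events}


def compliance_view(events: list[AuditEvent], query: str = "") -> list[str]:
    """Full timestamped log, oldest first, filtered by a case-insensitive search."""
    rows = [
        f"{e.timestamp.isoformat()} {e.action} {e.record_id} -> {e.decision}"
        for e in sorted(events, key=lambda e: e.timestamp)
    ]
    return [row for row in rows if query.lower() in row.lower()]


utc = timezone.utc
events = [
    AuditEvent(datetime(2025, 1, 6, 9, 5, tzinfo=utc), "acct-17", "read", "allowed"),
    AuditEvent(datetime(2025, 1, 6, 9, 0, tzinfo=utc), "acct-02", "read", "allowed"),
    AuditEvent(datetime(2025, 1, 6, 9, 7, tzinfo=utc), "deck-01", "send_external", "escalated"),
]

print(sorted(end_user_view(events)))
print(compliance_view(events, query="escalated"))
```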

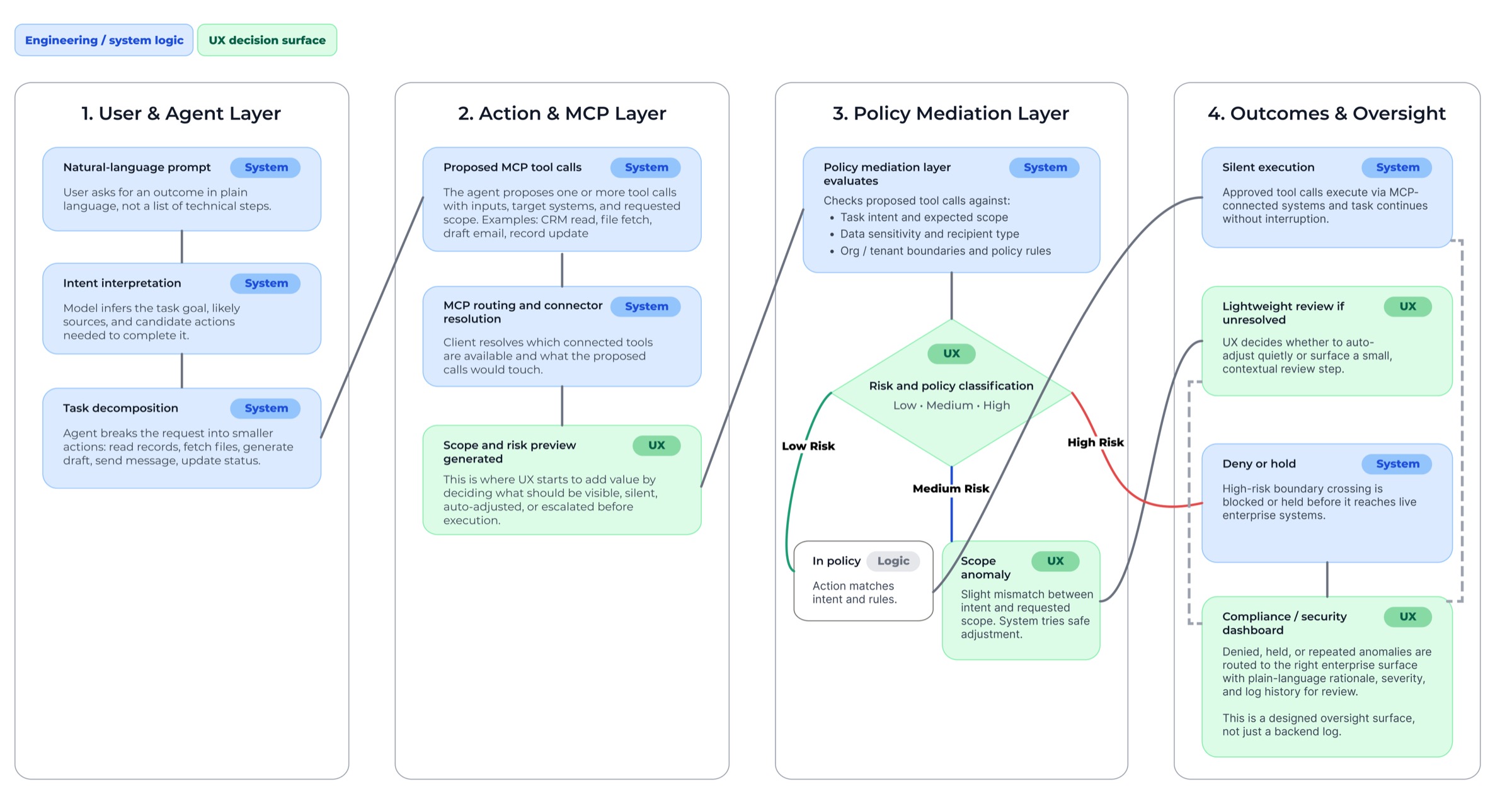

Governed Execution Flow

This flow addresses the gap between a user's natural-language request and the live tool calls an AI agent generates, introducing a policy-aware review layer that can allow, narrow, deny, or escalate actions before they affect enterprise systems.
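The four outcomes can be sketched as a single review function. The rules below are placeholders chosen for illustration, not the policy this project prescribes: a blocked tool is denied, a destructive action is escalated, and a message with external recipients is narrowed to internal ones.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NARROW = "narrow"
    DENY = "deny"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Request:
    """Hypothetical tool-call request passing through the review layer."""
    tool: str
    recipients: tuple[str, ...] = ()
    destructive: bool = False


def review(req: Request, org_domain: str, blocked_tools: frozenset[str]) -> tuple[Decision, Request]:
    """Return a decision and the (possibly narrowed) request to execute."""
    if req.tool in blocked_tools:
        return Decision.DENY, req
    if req.destructive:
        # A human decides before anything irreversible happens.
        return Decision.ESCALATE, req
    internal = tuple(r for r in req.recipients if r.endswith("@" + org_domain))
    if len(internal) < len(req.recipients):
        # Narrow the action instead of blocking it outright.
        return Decision.NARROW, replace(req, recipients=internal)
    return Decision.ALLOW, req


blocked = frozenset({"crm.export_all"})
send = Request("mail.send", recipients=("cfo@acme.example", "advisor@partner.example"))

decision, narrowed = review(send, "acme.example", blocked)
print(decision, narrowed.recipients)
```

Returning the narrowed request alongside the decision keeps the original intact, so the client can show the user what was changed and why.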

Authorization Is Not a User Experience

| What engineering delivered | What the product still needed |
| --- | --- |
| OAuth consent | Consent that builds understanding, not just records approval |
| Token-scoped access | Runtime visibility into what the AI is doing |
| Tool execution | A checkpoint before authorized actions cross a compliance boundary |
| A log in a database | A readable audit surface for non-technical users |

The MCP spec recommends keeping a human in the loop for tool invocations, but it cannot enforce how that experience is designed. UX determines when risk becomes visible, how severity is communicated, when the system stays quiet, when it pauses, and how oversight reaches the right person at the right moment.

Today: Workflow approvals outside the flow of work

This project adds: Runtime decision surfaces inside the client

Today: Admin audit logs in compliance consoles

This project adds: Role-aware audit and oversight

Today: Broad setup consent

This project adds: Runtime scope visibility tied to the action itself

Today: Silent hard blocks or vague failures

This project adds: Explanatory blocks that build understanding

Today: Policy engines without product behavior

This project adds: Client-side patterns for governed agent execution

Designed oversight is not decoration around AI. It is what makes agentic systems usable, legible, and trustworthy.

Role

Solo concept project. UX strategy, interaction design, research synthesis, systems framing, and prototype direction. I identified the gap, translated it into product behavior, and designed the client-side oversight patterns around governed agent execution.

Reflection

This project started with a question I kept coming back to: where does the human go when AI stops answering and starts acting? MCP is the handshake that lets an agent move beyond its current state and into live systems, real data, real consequences. That transition already has infrastructure. What it does not yet have is a designed human experience around it.

Engineers can add approval prompts. Compliance teams can require logs. These are real contributions, and they matter. But getting the signal to the right person, at the right moment, with friction that reflects the actual risk: those are design problems. That is the layer this project works in.

The more I mapped the gap, the more I recognized it as the same trust and control problem I have been solving throughout my career, now operating at a scale that makes it much harder to ignore. UX has a real role here, and I want to help shape that next chapter and contribute to the teams building it now.